Stop comparing price per million tokens: the hidden LLM API costs

Summary

Token pricing is misleading: the same input can produce up to 2.65x as many tokens depending on the model. We got wildly different token counts from identical content using OpenAI, Anthropic, and Google’s official token counting APIs.

Token efficiency varies by content type. Text, JSON, YAML, and tool definitions all tokenize differently. The cheapest provider changes depending on what you’re sending. The only way to know what you’re actually paying is to measure it.

OpenAI has the most efficient tokenizer. On tool-heavy workloads, claude-opus-4-7 costs 5.3x more than gpt-5.4 despite list prices being only 2x apart.

Most engineers know they need to evaluate models on their specific task because performance varies. But so does cost: the same input can cost several times more on one provider than another, even when their list prices look similar.

The typical metric for comparing LLM API costs is price per million tokens ($/MTok). Here’s what the major providers charge today:

| Model | $/MTok (input) |
|---|---|
| gemini-3.1-pro-preview | $2.00 |
| gpt-5.4 | $2.50 |
| claude-sonnet-4-6 | $3.00 |
| claude-opus-4-6 | $5.00 |
| claude-opus-4-7 | $5.00 |

But not all tokens are equal! Different providers use different tokenizers, so the same input produces wildly different token counts.

What is a tokenizer?

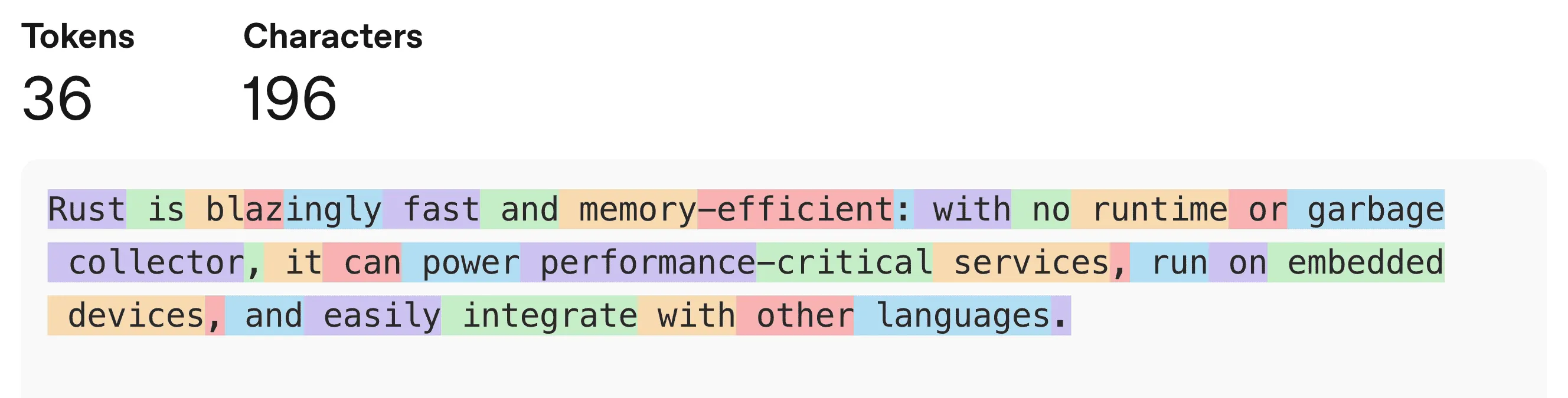

A tokenizer splits text into smaller units (tokens) that the LLM processes. Different tokenizers split the same text differently, producing different token counts.

For example, gpt-5.4 uses the tokenization below:

We sent identical inputs through each provider’s official token counting API and normalized against OpenAI’s:

| Model | Text | YAML | JSON | Tools |
|---|---|---|---|---|
| gpt-5.4 | 1.00x | 1.00x | 1.00x | 1.00x |
| gemini-3.1-pro-preview | 1.06x | 1.18x | 1.11x | 1.82x |
| claude-sonnet-4-6 | 1.17x | 1.25x | 1.22x | 2.06x |
| claude-opus-4-6 | 1.17x | 1.25x | 1.22x | 2.06x |
| claude-opus-4-7 | 1.57x | 1.53x | 1.70x | 2.65x |
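To make the normalization concrete, here is a minimal sketch in Python. It uses two toy tokenizers as stand-ins (word_tokens and chunk_tokens are hypothetical helpers, not any provider's real tokenizer) and divides each count by a baseline count, which is the same kind of ratio reported in the table above.

```python
import re


def word_tokens(text: str) -> list[str]:
    """Toy tokenizer A: words plus individual punctuation marks."""
    return re.findall(r"\w+|[^\w\s]", text)


def chunk_tokens(text: str, size: int = 3) -> list[str]:
    """Toy tokenizer B: fixed-size character chunks."""
    return [text[i:i + size] for i in range(0, len(text), size)]


def normalize(counts: dict[str, int], baseline: str) -> dict[str, float]:
    """Express every count as a multiple of the baseline's count."""
    base = counts[baseline]
    return {name: round(n / base, 2) for name, n in counts.items()}


# Illustrative input only: a small tool-definition-like JSON string.
sample = '{"name": "get_weather", "parameters": {"city": "string"}}'

counts = {
    "word": len(word_tokens(sample)),
    "chunk": len(chunk_tokens(sample)),
}
ratios = normalize(counts, baseline="word")
print(counts)
print(ratios)
```

The two toy tokenizers see the same string but report different counts, so the ratio against the baseline is what makes them comparable. Real tokenizers differ in subtler ways, but the comparison works the same way.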

Details about the input data

We used the following input data for this experiment:

| Type | Source |
|---|---|
| Text | The Iliad |
| JSON & YAML | Cloudflare’s OpenAPI Spec (trimmed to 2 million characters) |
| Tools | 100 synthetically generated tools (name, description, schema) |

Multiplying each model’s list price by its relative token count gives you what you actually pay to process the same input.

| Model | Text | YAML | JSON | Tools |
|---|---|---|---|---|
| gpt-5.4 | $2.50 (1.00x) | $2.50 (1.00x) | $2.50 (1.00x) | $2.50 (1.00x) |
| gemini-3.1-pro-preview | $2.12 (0.85x) | $2.36 (0.94x) | $2.22 (0.89x) | $3.64 (1.46x) |
| claude-sonnet-4-6 | $3.51 (1.40x) | $3.75 (1.50x) | $3.66 (1.46x) | $6.18 (2.47x) |
| claude-opus-4-6 | $5.85 (2.34x) | $6.25 (2.50x) | $6.10 (2.44x) | $10.30 (4.12x) |
| claude-opus-4-7 | $7.85 (3.14x) | $7.65 (3.06x) | $8.50 (3.40x) | $13.25 (5.30x) |

The differences are dramatic.

On tool-heavy workloads, claude-opus-4-7 costs 5.3x more than gpt-5.4 even though their list prices are only 2x apart.

The rankings also flip depending on what you’re sending: Gemini is the cheapest option for text and structured data, but becomes 46% more expensive than OpenAI on tool definitions.
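The arithmetic behind these figures is simple enough to check yourself. The sketch below, in Python, recomputes the effective prices from the list prices and token ratios reported above. The function names effective_price and relative_cost are ours, chosen for illustration.

```python
# Input list prices in $/MTok, copied from the pricing table.
LIST_PRICE = {
    "gpt-5.4": 2.50,
    "gemini-3.1-pro-preview": 2.00,
    "claude-sonnet-4-6": 3.00,
    "claude-opus-4-6": 5.00,
    "claude-opus-4-7": 5.00,
}

# Token counts relative to gpt-5.4, copied from the tokenizer table.
TOKEN_RATIO = {
    "gpt-5.4": {"text": 1.00, "yaml": 1.00, "json": 1.00, "tools": 1.00},
    "gemini-3.1-pro-preview": {"text": 1.06, "yaml": 1.18, "json": 1.11, "tools": 1.82},
    "claude-sonnet-4-6": {"text": 1.17, "yaml": 1.25, "json": 1.22, "tools": 2.06},
    "claude-opus-4-6": {"text": 1.17, "yaml": 1.25, "json": 1.22, "tools": 2.06},
    "claude-opus-4-7": {"text": 1.57, "yaml": 1.53, "json": 1.70, "tools": 2.65},
}

BASELINE = "gpt-5.4"
CONTENT_TYPES = ("text", "yaml", "json", "tools")


def effective_price(model: str, content_type: str) -> float:
    """Dollars to process the input that the baseline counts as 1M tokens."""
    return LIST_PRICE[model] * TOKEN_RATIO[model][content_type]


def relative_cost(model: str, content_type: str) -> float:
    """Effective price as a multiple of the baseline's effective price."""
    return effective_price(model, content_type) / effective_price(BASELINE, content_type)


for model in LIST_PRICE:
    cells = [
        f"{t}: ${effective_price(model, t):.2f} ({relative_cost(model, t):.2f}x)"
        for t in CONTENT_TYPES
    ]
    print(f"{model:24s}", " | ".join(cells))
```

Swapping in your own measured ratios for TOKEN_RATIO is the quickest way to see how the ranking shifts for your content.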

This analysis only considers base input token prices. In practice, cost comparisons get even more complex when you factor in prompt caching discounts, long-context pricing tiers, output tokens, and thinking tokens.

When choosing the right model for your task, you should compare both performance and cost in a real setting. A model that looks cheaper on paper might cost several times more once you account for how it counts tokens.

The only way to know what you’re actually paying is to measure it.
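As a rough illustration of what measuring looks like, the sketch below totals the input tokens reported per request and prices them at each model's list rate. The Usage record and the token counts are made-up placeholders (chosen to match the 2.65x tools ratio above), not real measurements.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Usage:
    """One request's usage. Hypothetical record shape for illustration."""
    model: str
    input_tokens: int  # as reported by the provider for this request


def input_cost_by_model(
    records: list[Usage], price_per_mtok: dict[str, float]
) -> dict[str, float]:
    """Sum reported input tokens per model and convert to dollars."""
    totals: dict[str, int] = defaultdict(int)
    for record in records:
        totals[record.model] += record.input_tokens
    return {
        model: tokens / 1_000_000 * price_per_mtok[model]
        for model, tokens in totals.items()
    }


# Placeholder data: the same two tool-heavy prompts sent to two models.
records = [
    Usage("gpt-5.4", 400_000),
    Usage("gpt-5.4", 600_000),
    Usage("claude-opus-4-7", 1_060_000),
    Usage("claude-opus-4-7", 1_590_000),
]
prices = {"gpt-5.4": 2.50, "claude-opus-4-7": 5.00}

costs = input_cost_by_model(records, prices)
for model, dollars in costs.items():
    print(f"{model}: ${dollars:.2f}")
```

The point of working from reported token counts rather than list prices is that the tokenizer difference is already baked into the numbers.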

Our open-source LLM gateway tracks real-world token usage and cost across providers. You can configure multiple variants and track usage and costs side by side.